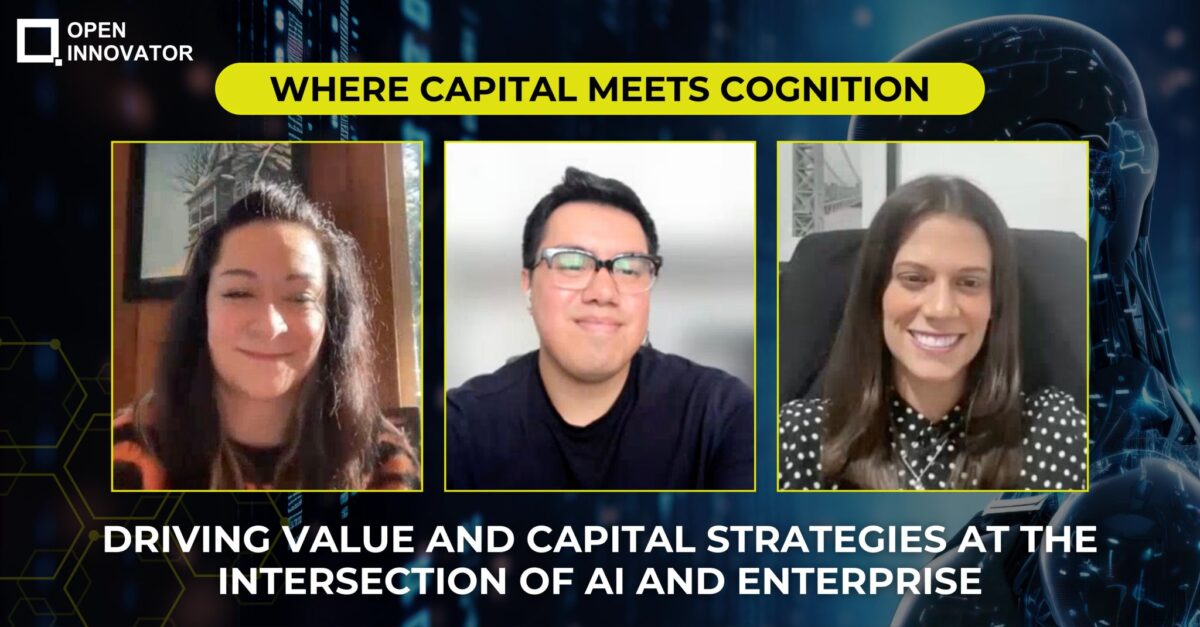

Open Innovator Knowledge Session | January 30, 2026

Open Innovator organized a no-holds-barred knowledge session on “From Hype to Cash Flow: What Will Actually Make Money in AI by 2026” on January 30, 2026, cutting through the noise to address the market’s critical pivot point. As moderator Naman Kothari put it: “Everyone is an AI expert in today’s world. We have reached peak hype.” But as 2026 unfolds, the market has developed low tolerance for potential and high hunger for profit.

The gold rush is over; we’re in the settlement phase, where nobody cares how cool your algorithm is if your cash flow is in a tailspin. This candid session brought together three investors and growth strategists who spend their days separating signal from noise and steering companies through high-stakes waters. For them, the question isn’t about the technology; it’s about whether the same old business logic dressed in a new hoodie can actually generate sustainable revenue.

Expert Panel

The session convened three experts who evaluate AI investments from distinctly different but complementary perspectives:

Deborah Boechat – Founder & CEO of Onit Center, bringing over 10 years of global experience helping startups and scaleups turn innovation into revenue through international expansion across the US, Latin America, Europe, and Asia Pacific, with strategic growth and capital connections that bridge four continents.

Carolina Castilla – Creator of the world’s first Artificial Intelligence Awareness Experiment (Love My Robot, Inc.) and Venture Capitalist at VC Lab, bringing culture, capital, and consciousness into the AI economy. An electronic music producer who uses performances as a “Trojan horse” to access Fortune 100 meetings, Carolina has developed AI-powered risk assessment tools that analyze startup viability in 30 seconds based on 100 questions VCs actually ask.

Hugo Lara – Investment Associate at BDev Ventures, backing Seed to Series B B2B software companies with real traction and a relentless focus on revenue generation, specifically targeting companies at the half-million ARR mark who are ready to scale to $5-10 million.

The discussion was moderated by Naman Kothari from NASSCOM, who framed the central challenge: “The era of cheap capital and high hype is over. We are now in the era of cash flow.”

The Litmus Test: Real Revenue Driver or Shiny Distraction?

The Founder’s Dilemma: People vs. AI Tools

Deborah opened with a fundamental tension she observes across continents: founders struggling between hiring more people or substituting them with AI tools that might perform similarly.

“Working with founders in their decision-making process around growth, I notice there’s a struggle: Should I hire more people or substitute that amount of people for a tech or AI tool that could perform in a maybe similar way?”

Her litmus test centers on understanding the business model and cash flow internally. The critical question: Is an AI tool a suitable solution, or can existing team members handle it?

“From a realistic standpoint, founders need good balance. Everyone wants to go for AI: ‘Show me what’s trending right now in AI that can help me solve this problem.’ But technology is a tool, a great guidance. There’s a need to keep balance between both, respecting budget.”

Respecting the budget is paramount, especially for companies seeking funding. “Technology is globalized. Even though it’s different demographics and geographies, at the end of the day, it’s a similar struggle or decision-making process.”

The 30-Second Verdict: Can AI Replace Investor Intuition?

Carolina brought a provocative perspective: she can assess investment readiness in 30 seconds using AI analysis of pitch decks, powered by research collected from interviewing VCs at startup competitions.

“I started interviewing VCs and collecting data, and I got 100 questions that VCs ask to know if a startup is going to succeed. I sat with my CTO and started prompting four years ago. Now I can get a pitch deck and say, ‘OK, these are the red flags or green flags of this startup.’”

But she immediately qualified this capability: “That doesn’t mean anything. I’m a general manager of a fund. My responsibility is make money for my investors, make my startups successful. But my real mission is asking: How do you personally separate innovation that improves human life from innovation that just accelerates everything, focusing on profits without taking care of workers who helped build the system?”

Her investment philosophy: “I follow founders with compelling value proposition. Obviously cash flow is the most important, but the question is more about human nature—where is this going and why are we putting so much into this?”

Integration Over Transformation: The Sustainable Path

Hugo provided the clearest framework for distinguishing temporary spikes from sustainable paths to $5-10 million cash flow.

“Ask the fundamental question: Is AI actually needed here, or is this just a nice-to-have that will pass at some point?”

He emphasized this is especially critical for companies targeting enterprise segments, where deals can be large and create the illusion of rapid traction.

The Integration Principle: “What I believe is the type of approach that looks to integrate will stick better than the type of approach that tries to transform the whole process. Look at it like trying to convert a combustion gas car into an EV—also while the car is moving. That’s going to be very hard. It’s going to sound promising, but companies rarely have time to wait for all that disruption to happen.”

His prescription: “When companies try to integrate into current discussions—pricing, churn, revenue growth—decisions that have mattered since the beginning, if you make your way into those conversations and are consistent about delivering results, that has better chance to stick past the initial early adopters.”

The warning: Many companies at the hype peak promised complete transformations of critical processes like sales and procurement—strategic areas that are extremely hard to change in short windows. “That’s why you hear headlines about ‘AI is not returning anything on investment’—because many ideas started with trying to change the way business is done instead of integrating into the way business is actually carried out nowadays.”

The Moats and Misfires: Where Investors See Value

Will AI Augment Capital Decisions or Will Human Judgment Remain the Final Moat?

Carolina’s answer was unequivocal but nuanced: “I will not call it intuition. AI helps me—I get a pitch deck and in 30 seconds I know if I’m introducing this startup to my advisors. But that is just an assessment. The real thing about investing is human and is responsibility.”

She outlined what AI can and cannot do:

What AI Can Do:

- Save time understanding a business, company, founder, team, readiness, business plan, competitive advantage, compelling value proposition

- Provide readiness scores and assessments

What AI Cannot Do:

- Provide responsibility (“The founder gets the money and goes to Hawaii to party”)

- Replace due diligence teams that verify data accuracy

- Ensure founder tenacity to respond to boards and continue raising money

Her perspective on the CEO role: “Everybody loves their startups, doing their products, doing demos. But the CEO life is not that—you get a team to do all that. You just keep knocking doors and raise money for the rest of your startup’s life.”

Bottom line: “Yes, we can get some readiness, but no, AI doesn’t give us responsibility.”

The Phantom Growth Trap: When Momentum Isn’t Scalable

Hugo identified the most common false confidence at the $500K+ ARR stage: “Founders often feel like they’ve nailed sales. When you talk to them, they actually don’t have a playbook—their sales is still founder-led and network-driven.”

This is perfectly fine, but it’s often not scalable or repeatable. “If selling depends on you being at the right place at the right time, talking to the right person, that’s very hard to replicate at scale. It can be a way of building a business, but not necessarily one that will generate double-digit growth, which is what you need for venture-backable companies.”

BDev Ventures specifically looks for $1 million+ ARR because “we back-tested it and found this is where companies usually have a go-to-market strategy that’s not evolving very fast and a sales team that can replicate the playbook.”

But the verification is critical: “Sometimes that’s not the case, and that’s certainly one of the most common false positives I find in day-to-day conversations.”
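
To see why the growth rate matters so much at this stage, the arithmetic behind scaling from the half-million ARR mark to $5 million can be sketched as follows. This is a minimal illustration, not a model the panel presented: the starting and target ARR come from the figures quoted in this article, while the function name `years_to_target` and the annual growth rates are assumptions chosen for the example.

```python
import math


def years_to_target(start_arr: float, target_arr: float, annual_growth: float) -> float:
    """Years of steady compounding needed to grow start_arr into target_arr.

    annual_growth is a fraction: 0.5 means 50% growth per year.
    Solves start_arr * (1 + annual_growth) ** years == target_arr for years.
    """
    if start_arr <= 0 or target_arr <= 0 or annual_growth <= 0:
        raise ValueError("ARR values and growth rate must be positive")
    return math.log(target_arr / start_arr) / math.log(1 + annual_growth)


if __name__ == "__main__":
    # Hypothetical growth rates; only the ARR endpoints come from the article.
    for growth in (0.5, 1.0, 2.0):
        years = years_to_target(500_000, 5_000_000, growth)
        print(f"{growth:.0%} annual growth: {years:.1f} years to reach $5M ARR")
```

Under these assumptions, a company growing 50% a year needs about 5.7 years to get from $500K to $5 million ARR, one doubling every year needs about 3.3 years, and one tripling every year needs about 2.1 years. Growth that depends on the founder being in the right room is hard to sustain at the faster rates.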

Global Expansion: Strength or Distraction?

Deborah brought crucial perspective on when international growth strengthens cash flow versus when it becomes a distraction from monetization.

The Right Stage to Expand:

- The company has matured in their current market

- They have a defined ICP (Ideal Customer Profile)—they know exactly who their client is

- They have cash flow and revenue coming in

- The business model is proven, sells well, and is scalable

The Critical Analysis: “If Company X was able to grow in that specific market, if they dominate what they’re doing from a scalable perspective even though it’s local, that’s good momentum to start looking outside. Look at other markets where your ICP has similar behavior.”

Important Considerations Beyond Finding Customers:

- Some countries/regions have barriers to entry

- Understand the new area or region thoroughly

- The purpose is more clients, opportunities, and revenue—not just a selling point like “we’re operating in this country”

Deborah’s warning: “If they’re not being profitable, it’s just not effective. It’s better for these companies to stay where they are and shore up before expanding to a new region.”

Her formula: “It’s all about good timing and understanding the numbers. Cash is king—it’s important to respect timing and money.”

Practical Investment Criteria: What Actually Matters

How to Decide: Double Down or Write Off?

When asked how to decide whether to double down on an AI investment or write it off as sunk cost, Hugo emphasized: “You really have to take a very close look at who’s actually winning.”

While cash flow is the lifeblood, for most of the growth stage “you’re going to look at how fast revenue is growing—that’s the first thing I would look at to prove the bets they’re making are paying off.”

The deeper analysis: “As Deborah mentioned, if growth came from a bet placed on global expansion and it actually paid out, that’s talking about not only revenue growth but the execution of something as complex as going to another country and actually selling. Those are signals about the team, not just superficial stages or numbers.”

Hugo’s framework: “There has to be a story and narrative behind the success that you believe will continue. That’s where you double down. The contrary is where you’d be more cautious—about companies making bets that aren’t paying off one after the other.”

Building Models vs. Solving Real Problems

When asked whether investors prefer AI companies building models or applying AI to solve real business problems, Carolina identified a nuanced trade-off:

The Risk of Building on Existing Platforms: “If you just build your agents or things on top of Anthropic or OpenAI, there is a risk.”

The Attraction of Proprietary LLMs: “Obviously you’re more attractive if you’re building your own LLM. But now, competing with billion and trillion-dollar companies like OpenAI is impossible.”

What She Actually Looks For:

- Privacy by design

- Auditability

- Bias testing

- Strong security

- Business models that don’t depend on exploiting attention or selling data

Carolina’s fund structure reflects this philosophy: “We’re investing seed in AI for enterprise, but we left 20% of the fund for AI that invests in the creator economy.”

Her stance on generative AI: “I’m totally against Gen AI and the tokenization model. I like to invest in businesses where the business model is not exploiting.”

Rapid Fire Insights: Gut Reactions from the Front Lines

Founder-Market Fit vs. Aggressive Unit Economics

Hugo’s answer: Founder-market fit.

His reasoning: “I find it has been more durable over time than unit economics. Unit economics becoming so important over the last 10 years is more a product of markets not being able to keep up with amounts of cash needed in new rounds.”

He provided historical context: Ten years ago, a Series A would be $3 million. “Nowadays a Series A can be upwards of $50 million. That’s why investors require companies not only to go for the full hike at all costs, but to make camp whenever possible—to look at if they’re making money, which you wouldn’t see in the early 2000s or even the 90s.”

The constant across time: “What you will see is always: who are you investing in?”

Can AI Become a Better Limited Partner Than Humans?

Carolina’s answer: False.

Her 15-second defense: “AI is prompted by a human. The human has mistakes by itself. I prompted AI when I was at a very big company, and the AI could think what I was prompting was correct. But I could probably be mistaken. So no, false.”

Dominating One Market vs. Being Average Across Three Continents

Deborah’s answer: Average across three continents.

Her reasoning: “Simply because when you’re in three different economies, worst-case scenario, if there’s a crash in one economy, you still have two to handle your business as an option.”
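
The diversification argument can be made concrete with a small calculation. This is a sketch under stated assumptions, not figures from the session: the revenue numbers, the market names, and the 50% shock are all hypothetical, and the comparison assumes both companies start with the same total revenue and that a crash hits only one market.

```python
def revenue_after_shock(
    revenue_by_market: dict[str, float], shocked_market: str, drop: float
) -> float:
    """Total revenue after one market loses the fraction `drop` of its revenue."""
    if not 0 <= drop <= 1:
        raise ValueError("drop must be between 0 and 1")
    if shocked_market not in revenue_by_market:
        raise KeyError(shocked_market)
    return sum(
        revenue * (1 - drop) if market == shocked_market else revenue
        for market, revenue in revenue_by_market.items()
    )


if __name__ == "__main__":
    # Hypothetical companies with the same total revenue of $3M.
    concentrated = {"market_a": 3_000_000.0}
    diversified = {"market_a": 1_000_000.0, "market_b": 1_000_000.0, "market_c": 1_000_000.0}

    for name, company in (("concentrated", concentrated), ("diversified", diversified)):
        before = sum(company.values())
        after = revenue_after_shock(company, "market_a", drop=0.5)
        print(f"{name}: keeps {after / before:.0%} of revenue after the crash")
```

With these made-up numbers, the single-market company keeps 50% of its revenue while the three-market company keeps about 83%. The sketch leaves out the cost side of the trade-off, since an "average" presence in three markets may earn less in total than dominating one.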

Will the AI Bubble Burst Before End of 2026?

Unanimous answer: No.

All three panelists agreed the AI bubble will not burst before 2026 ends, though Deborah qualified: “Not that soon.”

Green Flags: What Gets Investors Excited

Deborah: The Global Powerhouse DNA

Green flags that signal a global powerhouse, not just a tool seller:

- They have clients and revenue coming in

- Product-market fit is established – they understand exactly who their customer is

- With that configuration properly understood, scaling becomes easier

Deborah’s summary: “Definitely great flags: #1, do they have clients? If yes, they’re getting started. But certainly product-market fit—that’s definitely a path to scalability.”

On MVP stage specifically: “Do you have demand? Do you have people who are going to purchase your solution? It’s important to understand to which point AI is going to help or substitute humans. That’s something investors look at: How far can you go without our money? If you can make a lot of sales with less effort, that’s something investors will look at.”

Carolina: Compelling Value Proposition Wins

When asked what signal her AI picks up that humans might miss that makes her lean forward, Carolina’s answer was simple and direct: “Compelling value proposition.”

Her AI analyzes 100 questions to answer one prompt: “Is this startup going to succeed?” The tool came from organizing Startup World Cup regionals with access to the best startups and judges globally.

The workflow: “In 30 seconds it tells me how ready this pitch is for due diligence. That doesn’t mean what they say is real. There needs to be a person looking at data, team, resume, how they manage finances. It’s just a tool that tells me, ‘OK, this is a startup worth faster introduction than the other one.’”

Her cautionary tale: She once had a founder claim $30 million in signed MOUs in a pitch. “They fooled all the judges. When we went into diligence, everything was a lie. So I get data from the pitch: ‘Oh, what an amazing startup.’ Let’s get the meeting. ‘Oh, this is not a breakthrough. The LLM is not proprietary; they took 60% of OpenAI.’”

The conclusion: “AI can analyze data that humans present, but there’s due diligence and responsibility from the GM, investor, and founder to organize a business that’s good for the world, the team, the investors, and all stakeholders.”
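
The triage role Carolina describes, ranking pitches for introduction without treating the score as verification, can be sketched in a few lines. This is a hypothetical illustration and not her tool: the `PitchAssessment` class, the scoring rule (share of questions answered with a green flag), and the sample questions are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class PitchAssessment:
    """Hypothetical record of how one pitch deck answers a set of screening questions."""

    startup: str
    answers: dict[str, bool]  # question -> True for a green flag, False for a red flag

    @property
    def readiness(self) -> float:
        """Share of questions answered with a green flag, from 0.0 to 1.0."""
        if not self.answers:
            return 0.0
        return sum(self.answers.values()) / len(self.answers)


def introduction_order(assessments: list[PitchAssessment]) -> list[PitchAssessment]:
    """Sort pitches so the highest readiness score is introduced first.

    The score reflects only what the deck claims. It does not check whether
    the claims are true, so due diligence still has to follow.
    """
    return sorted(assessments, key=lambda a: a.readiness, reverse=True)


if __name__ == "__main__":
    # Made-up questions and answers, for illustration only.
    pitches = [
        PitchAssessment("Startup A", {"has_revenue": True, "clear_icp": False, "proprietary_tech": False}),
        PitchAssessment("Startup B", {"has_revenue": True, "clear_icp": True, "proprietary_tech": False}),
    ]
    for pitch in introduction_order(pitches):
        print(f"{pitch.startup}: readiness {pitch.readiness:.0%}")
```

A deck that claims $30 million in signed MOUs would score well here too, which is exactly the limitation the cautionary tale points to: the ranking decides who gets the meeting first, not who gets the money.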

Hugo: Trust Built Through Long Conversations

Beyond ARR and spreadsheets, Hugo looks for behavioral green flags—specifically how founders talk about cash flow.

“Now that AI is helping us do most manual processes at VC funds to save time, I’m sure from today until probably end of this year, most funds will switch to spending 90-95% of their time building trust with founders they’re looking to invest in and nurturing those relationships.”

Why? “At the end of the day, it’s like marrying for at least 10 years with a company. You need to be sure you’re investing in people you trust.”

What stands out in deals:

- Who’s the founder? What have they done before?

- How much do they actually know their business?

- Can they articulate a narrative beyond ‘give me this money and I’ll generate this result’?

- Can they go into the workings? Point out assumptions and levers that need to be pulled?

Hugo’s honest assessment: “I’ve had answers like, ‘I will work harder than the competition,’ and I’m sure they will—but that’s very hard for me to buy.”

BDev Ventures’ process reflects this: “Our investment process is very long—three to five months. But for the 60+ companies in our portfolio, that’s been beneficial. We’ve been working together for a good amount of time and know each other very well. Founders know if we’re the right investor; we’ve built enough conviction to make a decision.”

His conclusion: “As much as the market pushes for faster due diligence, I think it’s always going to be an investment in people. My green flag would be that I trust the person across the screen.”

The Five-Year Outlook: Infrastructure, Applications, or Tools?

When asked what type of AI investment will give the highest returns over the next five years, the panel offered varied but infrastructure-leaning perspectives:

Carolina: “I feel mobility could be a big one.”

Hugo: “I think infrastructure—but the infrastructure that could make a big difference. I’m not sure all VC funds will be able to invest in that, but I do think it’s a type of technology that could change people’s lives.”

Deborah: “I would also go with Hugo on infrastructure. I feel like it’s a good momentum. To consider five years from now with the evolution of technology and AI tools—we’re at the very beginning. As years go by, people are just going to think out-of-the-box and bring in solutions.”

Key Takeaways for 2026

1. Balance Is Everything

Technology is a tool and great guidance, but founders need balance between AI solutions and human capabilities, always respecting budget constraints.

2. Integration Beats Transformation

AI approaches that integrate into current business processes (pricing, churn, revenue growth) stick better than those promising complete transformations of strategic functions.

3. Cash Flow Is Still King

In 2026, the market has low tolerance for potential and high hunger for profit. Durability of the problem being solved matters more than algorithmic sophistication.

4. Founder-Market Fit Endures

While unit economics matter, founder-market fit has proven more durable over time. Hugo expects most funds to shift toward spending 90-95% of their time building trust with the founders they are looking to back.

5. Scalability Requires More Than Founder-Led Sales

At $500K+ ARR, the most common false confidence is thinking you’ve “nailed sales” when it’s still founder-led and network-driven—hard to replicate at scale.

6. Global Expansion Timing Is Critical

Expand only when you’ve dominated your current market with proven ICP, cash flow, and scalable business model. Otherwise, it’s a distraction from monetization.

7. AI Cannot Replace Due Diligence Responsibility

AI can assess readiness in 30 seconds, but it cannot verify data accuracy, ensure founder tenacity, or guarantee that a founder won’t go to Hawaii instead of executing.

8. Compelling Value Proposition Remains Supreme

Despite all the AI analysis tools, the fundamental question remains: Does this solve a real problem in a way customers will pay for consistently?

Conclusion: From Gold Rush to Settlement Phase

As Naman concluded: “The AI gold rush is moving into its most important phase—the phase of accountability.”

The unified message from all three investors: “In 2026, the market isn’t looking for the most advanced AI. It’s looking for the most indispensable business.”

From Deborah’s insights on global expansion timing to Carolina’s perspective on augmented decision-making while maintaining human responsibility, to Hugo’s warnings about phantom growth traps, the consensus is clear: Stop looking at valuation. Start looking at value.

The era of cheap capital and AI labels on every pitch deck is over. The settlement phase has begun, and in this phase, the question isn’t whether your algorithm is cool—it’s whether your business logic actually generates predictable, sustainable cash flow.

This Open Innovator Knowledge Session delivered a pragmatic master class on separating AI signal from noise in 2026. Deep appreciation to the expert panel—Deborah Boechat (Onit Center), Carolina Castilla (VC Lab/Love My Robot), and Hugo Lara (BDev Ventures)—for cutting through the hype and providing the real-world math on what will actually make money in AI.