Open Innovator Knowledge Session | January 27, 2026

Open Innovator organized a critical knowledge session on “The AI Arms Race: Defense vs. Offense” on January 27, 2026, addressing one of the most urgent challenges facing organizations today: the exponential acceleration of AI-powered cyber threats.

With Gartner reporting that cyber attacks now occur every 39 seconds—meaning five companies worldwide face breach attempts during a typical opening introduction—the session explored a stark reality: we are no longer worried about hackers in basements or even organized crime, but about code that doesn’t sleep, doesn’t blink, and operates at speeds human brains cannot perceive.

The panel examined whether AI-driven defenses have finally given organizations a defender’s advantage, or whether we’re simply building taller walls for increasingly sophisticated attackers wielding autonomous digital weapons.

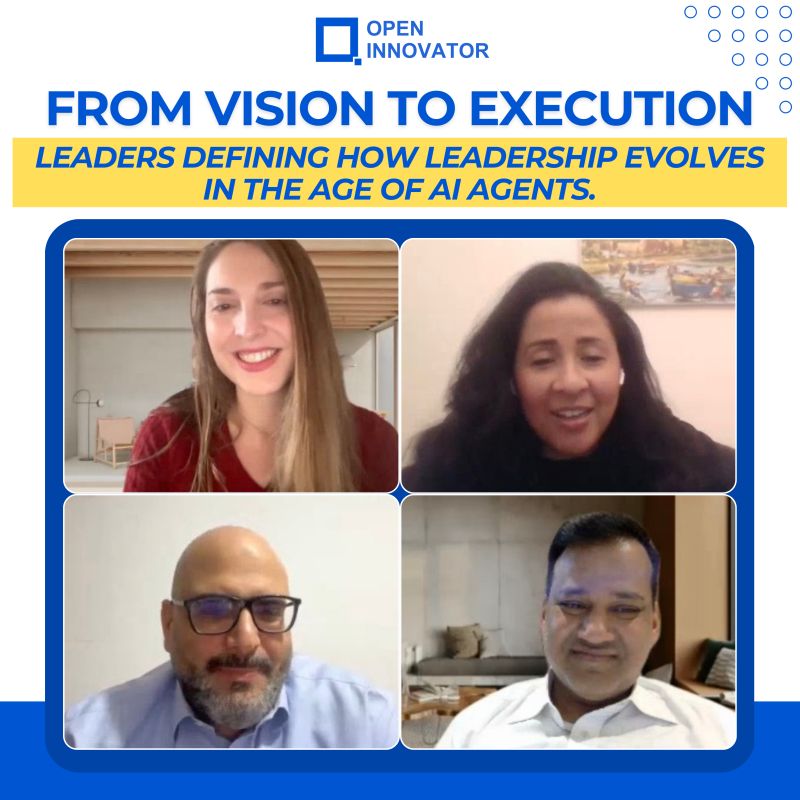

Expert Panel

The session convened four cybersecurity leaders—dubbed the “AI Avengers”—bringing expertise from infrastructure security, governance, zero-trust architecture, and enterprise AI deployment:

Clen C Richard – “The Zero Trust Visionary,” multi-award-winning strategist who builds digital immune systems, specializing in environments where trust is never assumed and verification is continuous—even when the entity requesting access looks and sounds exactly like your CEO.

Rudy Shoushany – “The Governance Architect,” Forbes Tech Council veteran who translates cybersecurity from an IT line item into the boardroom’s digital transformation insurance policy, bridging the gap between technical reality and executive decision-making.

Ella Türümina – “The AI Readiness Architect” at Siemens and founder of her own AI consulting practice, serving as the bridge between big ambition and big protection, ensuring enterprise AI scaling doesn’t inadvertently leave backdoors open for autonomous intruders.

Fadi Adam – “The Infrastructure Sentinel,” CEH-certified professional who has witnessed the zero moments of corporate security evolution firsthand, ensuring that when AI battles commence, the foundational infrastructure doesn’t just hold—it fights back.

The discussion was expertly moderated by Naman Kothari from NASSCOM, who framed the critical challenge: “We are literally bringing human brains to a machine gun fight.”

The Arms Race Reality: Speed as the New Currency

Naman opened with alarming statistics that set the urgency level:

- AI-driven phishing attacks have spiked 1,200% in recent months

- Attacks now happen in milliseconds while traditional human response times are measured in hours

- 76% of organizations admit they’re struggling to keep pace with AI-powered attack speeds

- The threat has evolved from Nigerian prince emails to deepfake CEOs on Zoom calls requesting urgent wire transfers

The fundamental question: Has the defender finally gained an advantage, or are we just building more sophisticated defenses for even more sophisticated attacks?

Round One: The Defender’s Advantage—Real or Illusion?

Zero Trust: Coverage Gaps Remain Critical

Clen Richard opened with a sobering reality check: “The defender’s advantage is there, but the asymmetry problem still exists. As defenders, we have to be right 100% of the time. Attackers only have to be right once.”

He highlighted a critical gap: even with AI deployed for threat defense, only 18% of the attack surface is currently covered. The other 82% is still exposed and demands urgent attention.

For AI agents specifically, Clen outlined a three-layer verification model essential for zero-trust environments:

Layer 1: Identity – “Who are you?” Frameworks such as SPIFFE and its reference implementation SPIRE use short-lived tokens and automatically rotated credentials to continuously verify AI agent identities.

Layer 2: Behavioral Drift Detection – “What are you becoming?” As AI agents evolve and touch more systems, organizations must detect abnormal patterns and drifts from expected behavior.

Layer 3: Intent Analysis – “Why are you acting like this?” The Explainable AI Index (AEI) helps determine at what confidence level machines should act autonomously, requiring AI to justify decisions and explain why signals were considered malicious.
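The three layers can be read as successive gates on every agent request. The following is a minimal sketch of that idea, not an implementation of SPIFFE or of any index mentioned in the session: the `AgentRequest` fields, the drift tolerance, and the confidence threshold are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class AgentRequest:
    # All field names are hypothetical, chosen only for this sketch.
    agent_id: str
    credential_expiry: float          # Unix time at which the short-lived credential expires
    calls_per_minute: float           # observed activity rate
    baseline_calls_per_minute: float  # expected activity rate for this agent
    explanation_confidence: float     # 0..1, how well the agent justified its action


def verify_agent(req, now, drift_tolerance=2.0, min_confidence=0.8):
    """Apply the three checks in order and return a decision string."""
    # Layer 1: identity. An expired credential is rejected outright.
    if now >= req.credential_expiry:
        return "deny: credential expired"
    # Layer 2: behavioral drift. Activity far above the baseline is escalated.
    if req.calls_per_minute > drift_tolerance * req.baseline_calls_per_minute:
        return "escalate: behavioral drift"
    # Layer 3: intent. A weakly justified action goes to a human reviewer.
    if req.explanation_confidence < min_confidence:
        return "escalate: low explanation confidence"
    return "allow"
```

Ordering the checks from cheapest to most subjective means an agent with an invalid identity never reaches the drift or intent checks at all.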

Clen’s verdict: “Speed is definitely a multiplier, and both attackers and defenders have the advantage. But at the end of the day, it’s the overall maturity in how you handle this that gives defenders the advantage.”

Governance: The Tug of War Where Attackers Stay Ahead

Rudy Shoushany brought the governance perspective with stark honesty: “It’s a tug of war. Winning is—I won’t say we’re not succeeding, but with smart AI and automation, vibe coding has become accessible to all. We’re seeing new attacks where AI itself develops attacks, not just humans anymore.”

He identified a critical escalation: AI agents are now creating their own attacks, moving beyond human-directed threats.

The governance gap is severe. Despite frameworks existing on paper, Rudy noted: “When you put it on the ground, the attacker has always been one step ahead. And now with AI involved, it’s maybe 2 steps or even 3 steps more advanced.”

A disturbing reality persists: “We talk with senior management, and unfortunately, many of them still don’t take the cybersecurity aspect as serious as it is and should be—even with all the bad experiences they’ve faced.”

Rudy pointed to vibe coding as an example of governance failure: organizations allow the technology without proper testing frameworks, creating “a new kind of vulnerabilities in the governance itself.”

His call to action: Management must act faster, more proactively, and “more viciously in a defense perspective,” potentially implementing blue team/red team methodologies to constantly work toward strong governance.

Enterprise Reality: Speed Meets Scale Challenges

Ella Türümina brought practical insights from working with Siemens-scale enterprises and her own AI consulting practice launched in 2025. Her philosophy: move from “progress to innovation” by first evaluating whether an organization truly needs AI or whether simpler automation would suffice, then building ecosystems that satisfy ROI ambitions.

“Not building taller walls, but bringing defense in other dimensions and making governance sexy again,” Ella explained, noting that enterprise scale offers both advantages and challenges.

The upside: When a CEO issues a directive in a large organization, implementation happens universally. “If your CEO addresses the science, the circular which everyone has to implement from today evening, then people just do it.”

The downside: Global enterprises span cultures, regions, and tools, making rollout time-consuming. However, with proper lean management and change management, “people also have fire in their eyes and they want to go through with you.”

Ella emphasized a three-layer pyramid approach: Governance first, then architecture, then deployment with continuous monitoring. The monitoring is critical—tracking AI performance against KPIs and swapping models when performance degrades.
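As a rough illustration of the monitoring step, the check below flags a model for replacement when its recent average score falls under a KPI threshold. The function name, the window size, and the notion of a single numeric score are assumptions made for this sketch; the session did not specify how performance is measured.

```python
def should_swap_model(recent_scores, kpi_threshold, window=5):
    """Return True when the average of the last `window` scores is below the KPI.

    `recent_scores` is a list of numeric performance scores, oldest first.
    With fewer than `window` scores there is not enough evidence to swap.
    """
    if len(recent_scores) < window:
        return False
    latest = recent_scores[-window:]
    return sum(latest) / window < kpi_threshold
```

Averaging over a window rather than reacting to a single bad score avoids swapping models on one noisy measurement.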

Infrastructure: The Foundation Must Fight Back

Fadi Adam brought the conversation to the technical foundation, emphasizing that AI enhances compliance with frameworks like GDPR, HIPAA, and PCI, but only when organizations actually follow these regulations.

His core belief: “AI is a tool to enhance. We cannot just replace humans. You cannot replace humans.”

Fadi warned against the dangerous assumption that AI tools can simply replace human security professionals: “Most companies think ‘I will buy this AI tool to do the job of a human,’ but after some time they have breach or loophole they cannot close because AI will not support full automation.”

He stressed several critical practices:

- Zero trust always – “Never trust, always verify, revalidations”

- Patch accurately and test before going live – citing the July 2024 CrowdStrike incident, in which a faulty update caused widespread system failures

- Test patches outside working hours to avoid business interruption

- Maintain updated disaster recovery plans for business continuity

Fadi’s perspective on the arms race: “AI will enhance the defense mechanism, but the question is: when we implement AI, are we ready for it? Because if you’re not ready, something goes wrong always.”

Round Two: Battlefield-Specific Challenges

Securing Borderless Infrastructure When Code Is the Person

Fadi tackled the challenge of autonomous AI agents moving freely through networks. His prescription:

1. Zero trust as the foundation – Always verify, never assume

2. Short-lived credentials – Not long-lived credentials that create persistent vulnerabilities

3. AI agent identity management – Each agent must have a verified identity tracking what it does, sees, and shares

4. Kill switches – Manual override capability when AI executes unauthorized code

5. Comparable models and tools – Multiple validation systems

6. Rule-based AND behavior-based restrictions – Dual-layer control mechanisms
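Several items in this list (a kill switch, rule-based restrictions, and behavior-based restrictions) can be combined in a single gate that every agent action passes through. The sketch below is one hypothetical way to do that; the `AgentGuard` class, its allow-list, and its simple action budget are illustrative assumptions rather than anything described by the panel.

```python
class AgentGuard:
    """Hypothetical gate that authorizes each action an AI agent attempts."""

    def __init__(self, allowed_actions, max_actions_per_window):
        self.allowed_actions = set(allowed_actions)           # rule-based allow-list
        self.max_actions_per_window = max_actions_per_window  # behavior-based budget
        self.actions_in_window = 0
        self.killed = False

    def kill(self):
        """Manual override: once triggered, every further action is refused."""
        self.killed = True

    def reset_window(self):
        """Start a new counting window (called by a scheduler in practice)."""
        self.actions_in_window = 0

    def authorize(self, action):
        if self.killed:
            return False  # kill switch engaged
        if action not in self.allowed_actions:
            return False  # rule-based restriction
        if self.actions_in_window >= self.max_actions_per_window:
            return False  # behavior-based restriction: unusually high activity
        self.actions_in_window += 1
        return True
```

For example, a guard created with `AgentGuard({"read_ticket"}, 2)` would approve two `read_ticket` actions, refuse a third in the same window, and refuse everything after `kill()` is called.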

“The AI agent should always be looked over through the identity—what it does, what it should do, what it should see, and what it should share,” Fadi emphasized, noting the lethal risk of AI accessing and sharing data without proper constraints.

Shadow AI: Innovation Underground or Security Nightmare?

Rudy addressed the explosive challenge of shadow AI—employees using unvetted AI tools to move faster, creating unauthorized backdoors.

His counterintuitive solution: Don’t try to ban it. You can’t.

“I did something like that 20 years ago,” Rudy admitted. “If you kill innovation in an organization, employees will be frustrated and find alternative ways. This is shadow IT, shadow AI. Organizations get it wrong—you cannot ban it. It’s there. It will always remain.”

His approach: The Sandbox Freedom Environment

Instead of driving innovation underground, create approved AI environments with clear boundaries:

- Data boundaries are clear

- Access is transparent

- It’s freedom, not surveillance

- In a controlled environment

“Do whatever you want there, but give us reporting. Let us learn. Most initiatives that go underground have no reporting—I never learn what’s happening. I’m changing this to get the output, learn, and put it back in the enterprise.”

Rudy’s rule of thumb for C-suites: “If employees are faster than your policy cycle, guess what? The policies are obsolete.”

This is the current reality with AI. Organizations need agility in both delivery and policy creation. “Governance is not there to police. Governance is there to enhance the environment in a very subtle way so everyone knows it’s a tool to drive innovation and enable, but with the guardrails we need.”

Security by Design: Speed AND Safety

Ella challenged the false choice between innovation speed and security: “Sometimes it may seem like you have to choose between speed and safety and fail at both. But the real insight is that security by design doesn’t compete with innovation—it accelerates it.”

How? Governance clarity eliminates rework. Automation compresses timelines. Staged rollouts catch problems at 1% scale instead of 100%.
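The staged-rollout point can be made concrete with a small sketch. It assumes a hypothetical `error_rate_at` callable that reports the observed error rate at a given exposure level; the stage fractions and the 1% error budget are illustrative defaults, not figures from the session.

```python
def staged_rollout(error_rate_at, stages=(0.01, 0.10, 0.50, 1.0), max_error_rate=0.01):
    """Increase exposure stage by stage and stop at the first unhealthy stage.

    `error_rate_at(fraction)` is a hypothetical probe returning the error
    rate observed while `fraction` of users or systems run the new version.
    """
    for fraction in stages:
        if error_rate_at(fraction) > max_error_rate:
            # The problem surfaced at this exposure level, so stop here.
            return {"status": "rolled_back", "stopped_at": fraction}
    return {"status": "complete", "stopped_at": stages[-1]}
```

If a change is faulty from the start, the loop stops at the first stage, so only 1% of the environment ever sees it.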

Her three-layer approach:

- Work with people – Lean management and change management for buy-in

- Guide through governance first, then architecture – Bridge legacy and modern systems

- Deploy with safety gates – Continuous monitoring, transparency, and close analytics

The payoff is measurable. Ella cited 2025 consulting reports from McKinsey and the World Economic Forum: Organizations using this three-layer approach report almost 30% gains from automation, with incident response times measured in minutes rather than hours.

“To sum up, we don’t need to choose between security and speed. We go from the foundation—governance, architecture, deployment everywhere with control, people controlling the sequence, and onboarding with learning curves. Success will be around the corner when you have clarity and transparency.”

Identity in the Age of AI Agents

Clen tackled perhaps the most profound challenge: verifying identity when the “person” requesting access is code that can be perfectly spoofed in milliseconds.

His starting point: Accept that identity is no longer purely human.

“We have to accept the fact that identity layer is now beyond humans,” Clen stated. “It’s now shared by applications, by machines, and machine identity is very, very critical.”

He pointed to Privileged Access Management (PAM) as an example of this evolution. Traditional PAM focused on RDP, SSH, and web access—now called “legacy PAM.” The new concept: Modern PAM with zero standing privileges and app-to-app permissions.

AI’s detection capabilities are demonstrating their power: AI solutions have detected vulnerabilities in SQLite that were hidden for over 20 years, undetected by traditional fuzzing methods. “That’s when you see the capability of AI—the speed is just unmatched.”

For continuous verification, new frameworks are emerging:

- SPIFFE and SPIRE – Using short-lived certificates and existing authentication layers

- Continuous authentication – Not authenticate once, but continuously prove legitimacy

Clen described a proof of concept with WSO2 and Microsoft involving booking agents: “Although they are part of the same app, when the user agent speaks to the booking agent, the booking agent still must verify it. There is segregation on the identity at the agent level, and they must continuously verify their authenticity before they can work with others.”
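The pattern of one agent verifying another on every call can be sketched with short-lived signed tokens. This is a simplified stand-in, not the WSO2 or Microsoft proof of concept and not the SPIFFE API: it uses a shared-secret HMAC from the Python standard library, whereas SPIFFE identities are carried in X.509 certificates or JWTs. The secret, the token layout, and the 60-second lifetime are assumptions for illustration.

```python
import hashlib
import hmac

SECRET = b"demo-shared-secret"  # hypothetical; a real system would not hard-code this


def issue_token(agent_id, now, ttl_seconds=60):
    """Issue a token of the form 'agent_id|expiry|signature'.

    `agent_id` must not contain the '|' separator.
    """
    expiry = int(now) + ttl_seconds
    payload = f"{agent_id}|{expiry}"
    signature = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}|{signature}"


def verify_token(token, now):
    """Return the agent id if the token is authentic and unexpired, else None."""
    try:
        agent_id, expiry, signature = token.split("|")
        expiry_ts = int(expiry)
    except ValueError:
        return None  # malformed token
    expected = hmac.new(SECRET, f"{agent_id}|{expiry}".encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return None  # signature mismatch: token was forged or altered
    if now >= expiry_ts:
        return None  # token expired: the caller must obtain a fresh one
    return agent_id
```

In this sketch the booking agent would call `verify_token` on every incoming request, so a user agent holding an expired or altered token is refused even though both agents belong to the same application.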

Rapid Fire Insights: Bold Predictions

Biometrics vs. Passwords

Clen’s answer: Biometrics. “Passwords are easy to crack and breach. Biometrics are more complicated in implementation.” When challenged about deepfakes, he acknowledged the cat-and-mouse game: “Liveness detection systems to detect deepfakes are also evolving.”

Brand Reputation = Cybersecurity Record?

Rudy’s answer: True. His reasoning was blunt: “I will not work with a company that has been hacked, that has been compromised. Very simply, I will not do that. I will not put my money, not spend anything. It’s reputation today.”

Speed vs. Security?

Ella’s answer: Security. “There is no speed without security. You can be as fast as possible, but to really contribute long-term, you need a safe environment. First the governance, then innovation and speed rise exponentially afterwards.”

Humans as the Weakest Link?

Fadi’s answer: True. “Humans always make errors. We are made to make errors and fail. The AI corrects human error.”

Rudy’s addition: “A human will always be accountable and is the weakest point. But AI could also be the weakest point—it will be the strongest, but also the weakest. We must balance, balance, balance.”

Clen’s critical question: “When you offload everything to AI and AI makes a mistake that causes business disruption, who’s accountable? The AI? The developer? The person who used it? Your company? This is a very interesting topic.”

Trillion-Dollar Cyber Attack Before 2030?

Unanimous answer: Yes. All four panelists agreed this milestone will be reached before the decade ends.

Looking to 2030: The Smartest Moves We Must Make Now

Clen: The Partnership Between Human and AI

“By 2030, it’s not about who has the power or what model is strongest—it’s the partnership between human and AI.”

He used Security Operations Centers (SOCs) as an example: analyst burnout is very real due to overwhelming incident volumes. AI is simultaneously the cause and the solution: attackers leverage AI, which adds pressure, while analysts can use AI to make decisions quickly.

The key distinction: “Do we reach full autonomy? No. Humans always have to be in the loop—it’s human ON the loop rather than human IN the loop.”

Clen’s vision: Routine tasks must be autonomous. Irreversible tasks must always remain within human control. This partnership is the key for the future.

Rudy: Commoditize AI, Preserve Human Judgment

“By 2030, I think the discussion of AI won’t be here anymore—it will be something else. But our human judgments will always remain key. It will not be commoditized.”

Organizations that will thrive:

- Open innovation through smart sandboxing

- Preserve human override authority

- Have the latest research to challenge the algorithms

- Reward employees working in 24/7/365 SOC environments

Rudy’s critical framework: Create a map showing where AI is taking over, then identify how humans remain involved and who owns each piece. “We will be ruled by machines in the future, so how can humans stay valid in this loop?”

Ella: Map Decision Rights on a Single Page

Ella’s advice for C-level executives: “Pick your 5-10 most critical AI-driven decisions—where money moves, people are hired, machines are controlled—and map the decision rights on a single page for each.”

Write it down in plain language:

- The decision

- The role of AI

- The role of humans

- The escalation triggers

- The exact way a human can override the system
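The five items above map naturally onto a small record that can be checked for completeness before it is signed off. The sketch below is one hypothetical way to capture such a page; the `DecisionRight` class and the sample payment decision are invented for illustration.

```python
from dataclasses import dataclass, field, fields


@dataclass
class DecisionRight:
    """One-page record for a single AI-driven decision (hypothetical structure)."""
    decision: str = ""
    ai_role: str = ""
    human_role: str = ""
    escalation_triggers: list = field(default_factory=list)
    override_procedure: str = ""

    def missing_fields(self):
        """List the names of fields that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Illustrative example only; the content is invented.
payment = DecisionRight(
    decision="Approve supplier payments above a set limit",
    ai_role="Scores each payment for fraud risk",
    human_role="Finance controller approves or rejects flagged payments",
    escalation_triggers=["Risk score above threshold", "New supplier"],
)
print(payment.missing_fields())  # the override procedure has not been written yet
```

A page with any missing field, such as the override procedure here, is not yet a complete statement of who decides what.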

“Your AI suddenly comes from being magic to reality—just a tool like Excel. It’s no longer powerful above humans. It’s just a tool which humans operate, not something operating above them.”

Ella’s conclusion: “AI won’t replace humans. It’s just a new normal.”

Fadi: Align With the AI Shield

“Companies and organizations should adapt—not just adapt, but adapt under the pressures of governance. All companies should comply and follow standards and regulations to align with the AI shield to build very strong defense infrastructure.”

He emphasized the CIA triad (Confidentiality, Integrity, Availability) as the foundation for every AI implementation in defense.

Most importantly: “Human always has to supervise the AI shield. Always human has to be in the picture.”

Key Takeaways for 2026 and Beyond

1. The Attack Surface Has Exploded

Only 18% is currently covered by AI-driven defenses. The remaining 82% represents urgent unaddressed risk.

2. Governance Is Not Policing

Create sandbox environments where innovation can flourish with guardrails, not underground where you have no visibility or control.

3. Speed Without Security Fails

Security by design doesn’t slow innovation—it accelerates it by eliminating costly rework and catching problems at 1% scale.

4. Identity Must Be Continuously Verified

In a world where AI agents request access, authentication cannot be a one-time event—it must be continuous at every interaction.

5. Human ON the Loop, Not IN the Loop

Routine tasks should be autonomous. Irreversible decisions must remain under human control with clear override mechanisms.

6. Shadow AI Cannot Be Banned

Organizations must provide approved environments for experimentation rather than driving innovation underground where security cannot monitor or learn from it.

7. Kill Switches Are Non-Negotiable

Every autonomous AI agent must have manual override capability when it executes unauthorized actions.

Conclusion: The Question Isn’t If Machines Are Coming—It’s Whether We’re Ready to Lead Them

As Naman concluded: “AI is the weapon, but the human is still the architect.”

The panel consensus was unambiguous: by 2030, organizations that survive and thrive will be those that master the partnership between human judgment and AI capability. They will commoditize AI while preserving irreplaceable human override authority. They will map decision rights clearly, create governance frameworks that enable rather than police, and build infrastructure where humans remain supervisors, not spectators.

The arms race is real. The trillion-dollar cyber attack is coming before 2030. But the defender’s advantage exists for those mature enough to implement zero-trust frameworks, short-lived credentials, behavioral drift detection, explainable AI, and—most critically—the wisdom to know when humans must step in.

As the session closed, the roadmap was clear: The question isn’t whether the machines are coming. It’s whether you are ready to lead them.

This Open Innovator Knowledge Session provided a survival manual for the AI arms race. Huge appreciation to the expert panel—Clen C Richard, Rudy Shoushany, Ella Türümina, and Fadi Adam—for their candid insights on defending against autonomous threats while scaling AI safely and strategically.